- By George Burchell


When Research Gets Stuck, It’s Rarely About Intelligence

I’ve lost count of how many times someone has told me they feel “stuck” in their research and immediately blamed themselves. They’ll say things like:

- “I must be missing something.”

- “Maybe I’m just not cut out for this.”

- “Everyone else seems to manage it.”

In reality, most of the time, the problem isn’t the researcher. It’s the system they’re being asked to work inside.

One moment that really stuck with me involved a researcher deep into a systematic review who had hit a wall at the data extraction stage. They weren’t underprepared; they were well into the work and completely bogged down.

What “Stuck” Actually Looked Like

Before we changed anything, their day-to-day reality looked familiar to anyone who’s done this kind of work: dozens of PDFs open at once, outcome measures reported inconsistently across studies, endless copy-and-paste into Excel, and constant second-guessing about whether something had been missed.

And underneath all of that was a quiet but persistent anxiety that sounded like: what if I’ve overlooked something important?

Nothing about this was a lack of effort or capability. This was a careful, thoughtful, and methodologically aware researcher. But the way the work was set up meant they were doing two very different jobs at the same time:

- High-precision, repetitive admin

- Deep interpretive, methodological thinking

That’s a tough combination for any human brain to carry for long.

My Belief: These Aren’t Intelligence Problems

Over time, I’ve become fairly firm on this belief:

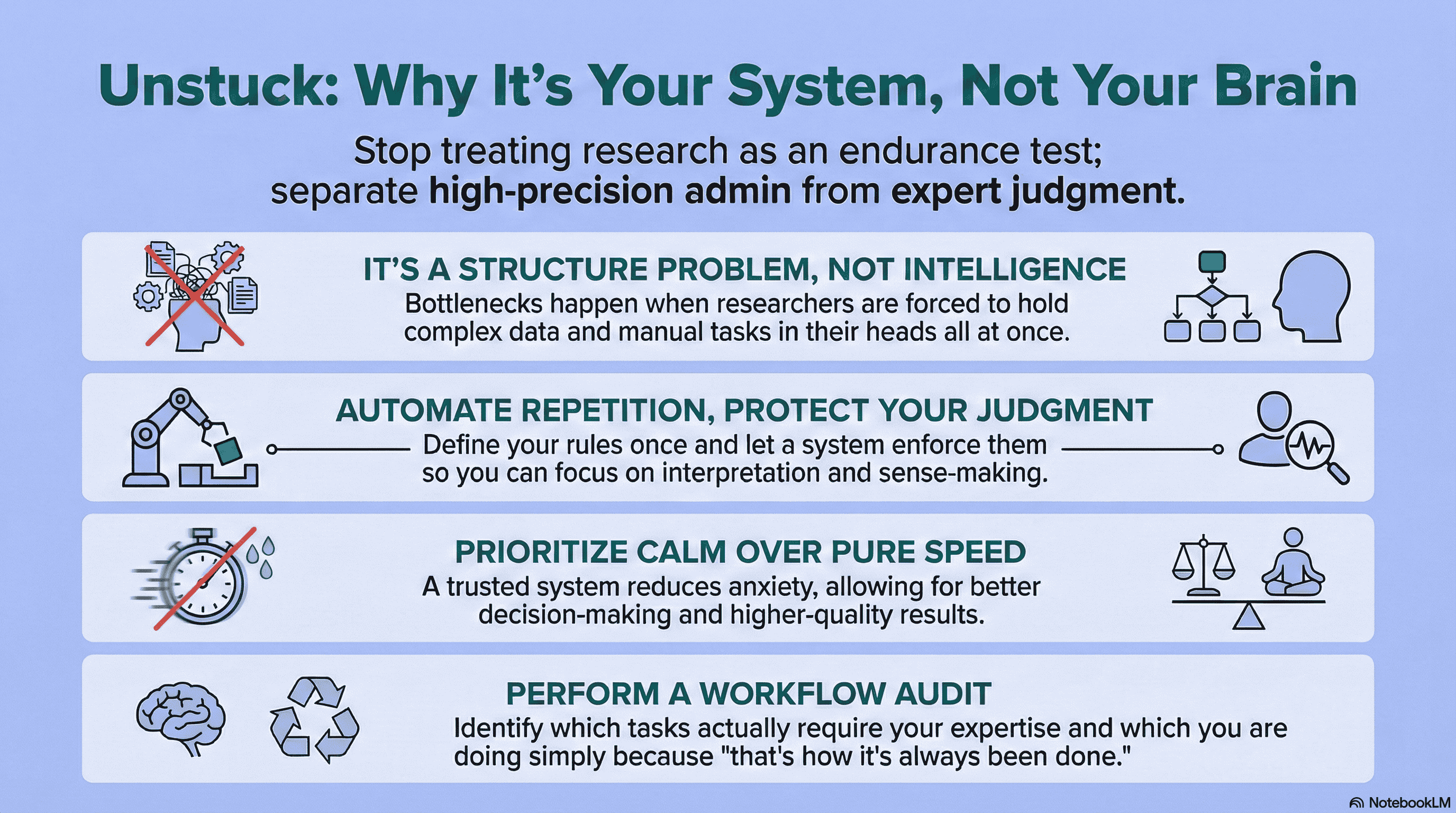

Most research bottlenecks are structure problems.

We often ask researchers to:

- Hold complex inclusion criteria in their head

- Apply those criteria consistently across dozens or hundreds of papers

- Manually extract and format data

- Maintain methodological rigour

- And stay confident they haven’t made an error

All at once!

That’s not a good division of labour. Humans are excellent at judgement, interpretation, and sense-making. We’re far less reliable when it comes to repetitive, high-precision tasks done at scale, especially under time pressure.

When the structure is weak, even very smart people start to doubt themselves. The same thing happens with tools: the gap between “powerful” and “usable” is something I unpack in Powerful Isn’t the Same as Usable.

What Changed When Structure Took Over

The real shift for this researcher didn’t come from working harder or being more disciplined. It came from changing how the work was organised.

Instead of wrestling with PDFs and spreadsheets, the process became much simpler in principle: define what matters once, apply it consistently everywhere, and let the system enforce that structure.

Once the rules were set clearly, they didn’t need to be re-decided on every paper. The system handled the repetition and the researcher handled the thinking.
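As a rough illustration of “define once, apply everywhere”, here is a minimal Python sketch. The field names, the allowed study designs, and the `check_record` helper are all hypothetical, made up for this example. It is not the tool this researcher used, just one way the idea could look in code.

```python
from dataclasses import dataclass


# Hypothetical extraction schema: the fields and allowed values are
# illustrative assumptions, not taken from any real review protocol.
@dataclass(frozen=True)
class Field:
    name: str
    required: bool = True
    allowed: tuple = ()  # empty tuple means any value is accepted


# The rules are written down once, in one place.
SCHEMA = (
    Field("study_id"),
    Field("design", allowed=("RCT", "cohort", "case-control")),
    Field("sample_size"),
    Field("primary_outcome"),
    Field("follow_up_weeks", required=False),
)


def check_record(record, schema=SCHEMA):
    """Return a list of problems; an empty list means the record fits the schema."""
    problems = []
    for field in schema:
        value = record.get(field.name)
        if value is None or value == "":
            if field.required:
                problems.append(f"missing: {field.name}")
            continue
        if field.allowed and value not in field.allowed:
            problems.append(f"unexpected value for {field.name}: {value!r}")
    return problems


# Two made-up extracted records: the first is clean, the second is not.
records = [
    {"study_id": "S01", "design": "RCT", "sample_size": 120,
     "primary_outcome": "pain score"},
    {"study_id": "S02", "design": "rct", "sample_size": 85},
]

for record in records:
    print(record["study_id"], check_record(record))
```

The rules live in one place (`SCHEMA`), so an inconsistency such as “rct” versus “RCT”, or a missing outcome, gets flagged by the check rather than relying on someone noticing it on the ninetieth paper.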

What surprised them most wasn’t just the time saved, although that was significant. It was a sense of relief. They stopped feeling like they were constantly second-guessing every decision.

They could see, clearly, what had been captured and why. The work felt calmer.

Why Calm Matters More Than Speed

Speed gets talked about a lot in research tooling. It’s all about faster screening, extraction, and synthesis. But in this case, speed wasn’t the most important outcome.

Being calm was.

Once the researcher trusted the process, they trusted themselves again. They were no longer rushing or feeling anxious. That matters, because calm researchers make better decisions.

They spot patterns more easily, interpret results more carefully, and are less likely to cut corners out of fatigue or frustration. Good research comes from conditions that allow intelligence to be used well.

The Hidden Cost of “That’s How It’s Always Been Done”

One of the biggest blockers I see is habit.

A lot of researchers do parts of their workflow a certain way simply because that’s how the process has historically been done. This includes manual copy-pasting, overloaded spreadsheets, and re-interpreting the same criteria again and again.

When you step back and look at it honestly, much of that work doesn’t benefit from human judgement at all. It just consumes it and can lead to fatigue. And once fatigue sets in, confidence is usually the first thing out the door.

A Question Worth Sitting With

Whenever someone tells me they’re stuck in their workflow, I tend to ask: which part of your process actually requires your expertise, and which part are you doing just because that’s how it’s always been done? For a step-by-step way into a clearer process, our Quick Start Guide is built around that separation.

It’s not an easy question. It often brings up uncomfortable answers. But it’s usually the start of meaningful change.

Once you separate judgement from repetition, research starts to feel like research again, instead of an endurance test.

And that’s when people stop feeling stuck.

About the Author

George Burchell

George Burchell is a specialist in systematic literature reviews and scientific evidence synthesis with significant expertise in integrating advanced AI technologies and automation tools into the research process. With over four years of consulting and practical experience, he has developed and led multiple projects focused on accelerating and refining the workflow for systematic reviews within medical and scientific research.