- research software usability

- 5 min read

- By George Burchell


The Hardest Lesson I Learned in 2025: Powerful Isn’t the Same as Usable

If you’d asked me at the start of 2025 what mattered most when building research software, I’d have answered with some confidence: accuracy, capability, and technical robustness.

All true and all important. But they weren’t the lesson.

The hardest lesson I learned this year was that building something powerful is not the same as building something usable.

That realisation didn’t come from a dramatic failure or a big system breaking. It came quietly, through watching smart people struggle in ways they shouldn’t have had to.

Watching Smart People Get Stuck

What sparked this lesson was seeing genuinely capable researchers hesitate when using tools I knew were technically sound. The software worked, the logic was right, and the outputs were correct.

And yet people paused and second-guessed themselves. Some people quietly dropped off altogether.

At first, it was tempting to explain this away. Maybe they needed more training, or they weren’t used to the workflow yet. But that wasn’t it. They were thoughtful, cautious, evidence-driven researchers: exactly the people the tools were built for.

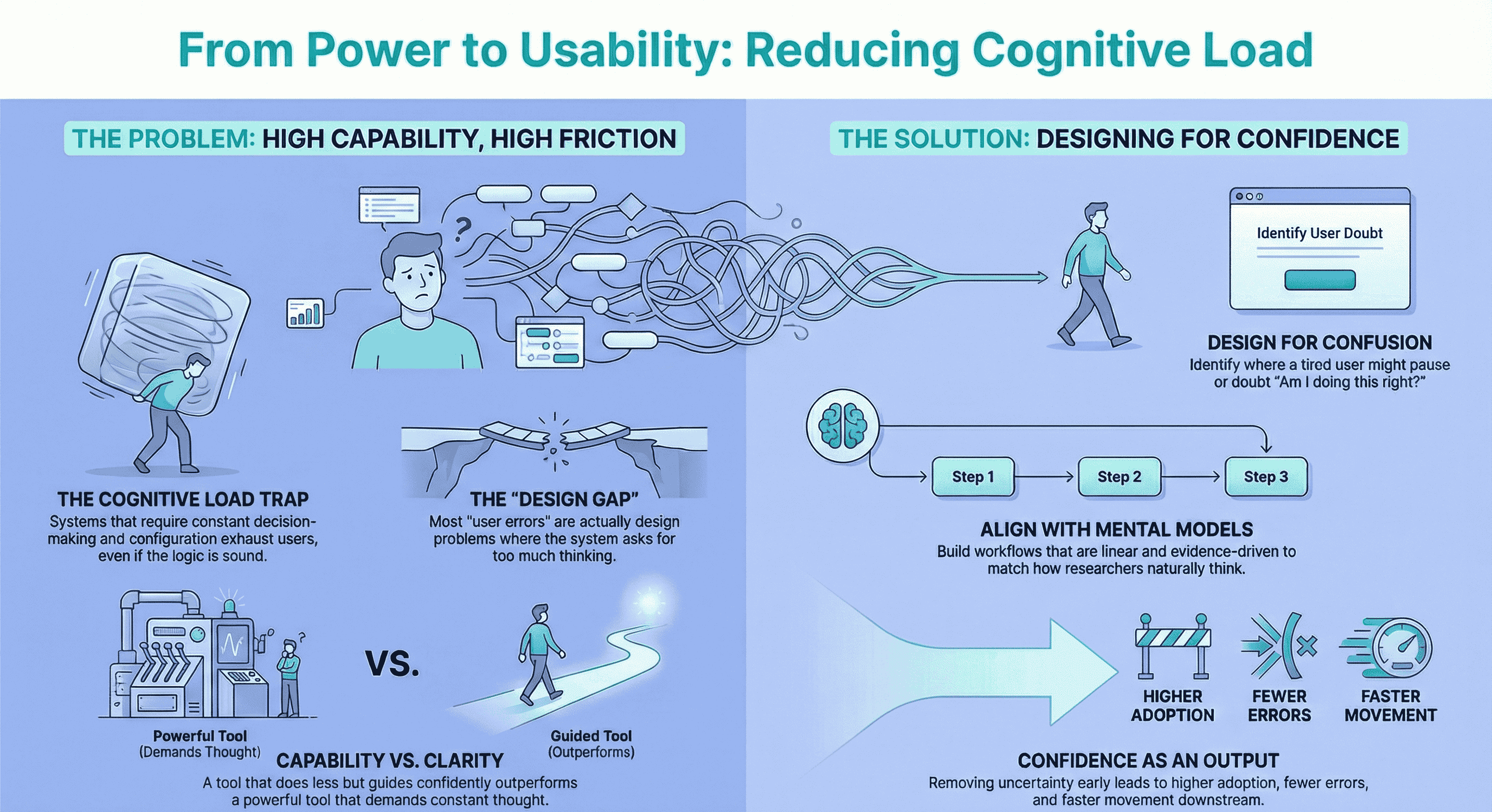

The problem was cognitive load.

When Capability Creates Friction

Cognitive load is one of those phrases that sounds abstract until you see it play out in real life.

It shows up when a system asks someone to make too many decisions while they’re already doing hard work. It shows up when every step requires judgement calls, configuration choices, or interpretation, even if each step is logical.

On paper, a system that can do everything sounds impressive. In practice, a system that constantly asks “What do you want to do next?” can be exhausting. I realised here that clarity beats capability.

A tool that does less, but guides someone confidently through that “less”, will always outperform a tool that does everything but demands constant thinking to use it.

Designing for How People Actually Think

This lesson helped my users more than it helped me.

Once I stopped designing around what the technology could do, and started designing around how researchers actually think, things changed quickly. Researchers want to feel confident using a tool, rather than feeling clever about it.

They tend to be cautious and to think in linear steps. They want evidence, traceability, and reassurance that they’re doing things properly. So instead of asking users to bend to the system, I rebuilt workflows around their mental model.

What happened next wasn’t subtle. Adoption went up, confidence increased and, most importantly, errors went down. The same underlying technology suddenly felt easier because it had become clearer. If you’re new to our tools, the Quick Start Guide is built around that same idea: clarity first.

Designing Backwards from Confusion

The biggest change this lesson triggered was in how I approach design altogether.

I no longer start with the best-case scenario. I start with confusion.

I ask questions like:

- Where would a tired PhD student pause here?

- Where would someone quietly wonder, “Am I doing this right?”

- Where would a reviewer look at an output and feel unsure?

- Where would doubt creep in, even if the result is technically correct?

Those moments matter more than the moment of success.

If you remove uncertainty early, everything downstream becomes easier. That usually looks like users moving forward with confidence instead of hesitation, and trusting the system because it feels aligned with how they think.

The Difference Between Skill Gaps and Thinking Gaps

One thing I’ve learned is that many problems we label as “user error” aren’t errors at all. They’re design problems.

If someone keeps getting stuck at the same point, it’s rarely because they lack skill. More often, it’s because they’re being asked to think too much at the wrong time.

Research is already demanding. It requires focus, judgement, and discipline. Adding unnecessary mental friction on top wears people down.

A good system absorbs complexity so the user doesn’t have to. That doesn’t mean dumbing things down. It means respecting how much cognitive effort the work already requires. The post on why systematic reviews are really about managing chaos, and how structure helps, gets into the same mindset.

The Question I’d Ask Others

If I had to pass one question on to others who learned a hard lesson in 2025, it would be: did the problem come from a lack of skill or from asking people to think too much while doing hard work?

It’s an uncomfortable question because it often points back to our own assumptions as builders, designers, or leaders.

We know the logic inside out. What feels obvious to us can feel heavy to someone meeting it for the first time, especially when they’re tired, under pressure, or trying to do careful, meaningful work. If you’re building or choosing tools for systematic reviews, that gap between “obvious to us” and “heavy for them” is exactly where usability wins or fails.

What I’m Carrying into 2026

I’m heading into the next year with a much stronger filter for decision-making.

If something adds choice, complexity, or uncertainty, it has to earn its place. If something reduces doubt, friction, or mental load, it’s worth protecting.

Power and accuracy still matter. But without clarity, they don’t land.

My experiences over 2025 have taught me that the best systems make people feel steady. The piece on how we think about tools and workflows on this platform ties capability and clarity together.

That’s the standard I’m building towards now.

About the Author

George Burchell

George Burchell is a specialist in systematic literature reviews and scientific evidence synthesis with significant expertise in integrating advanced AI technologies and automation tools into the research process. With over four years of consulting and practical experience, he has developed and led multiple projects focused on accelerating and refining the workflow for systematic reviews within medical and scientific research.